How AI Changed My Coding Workflow: Real Examples That Boosted My Productivity

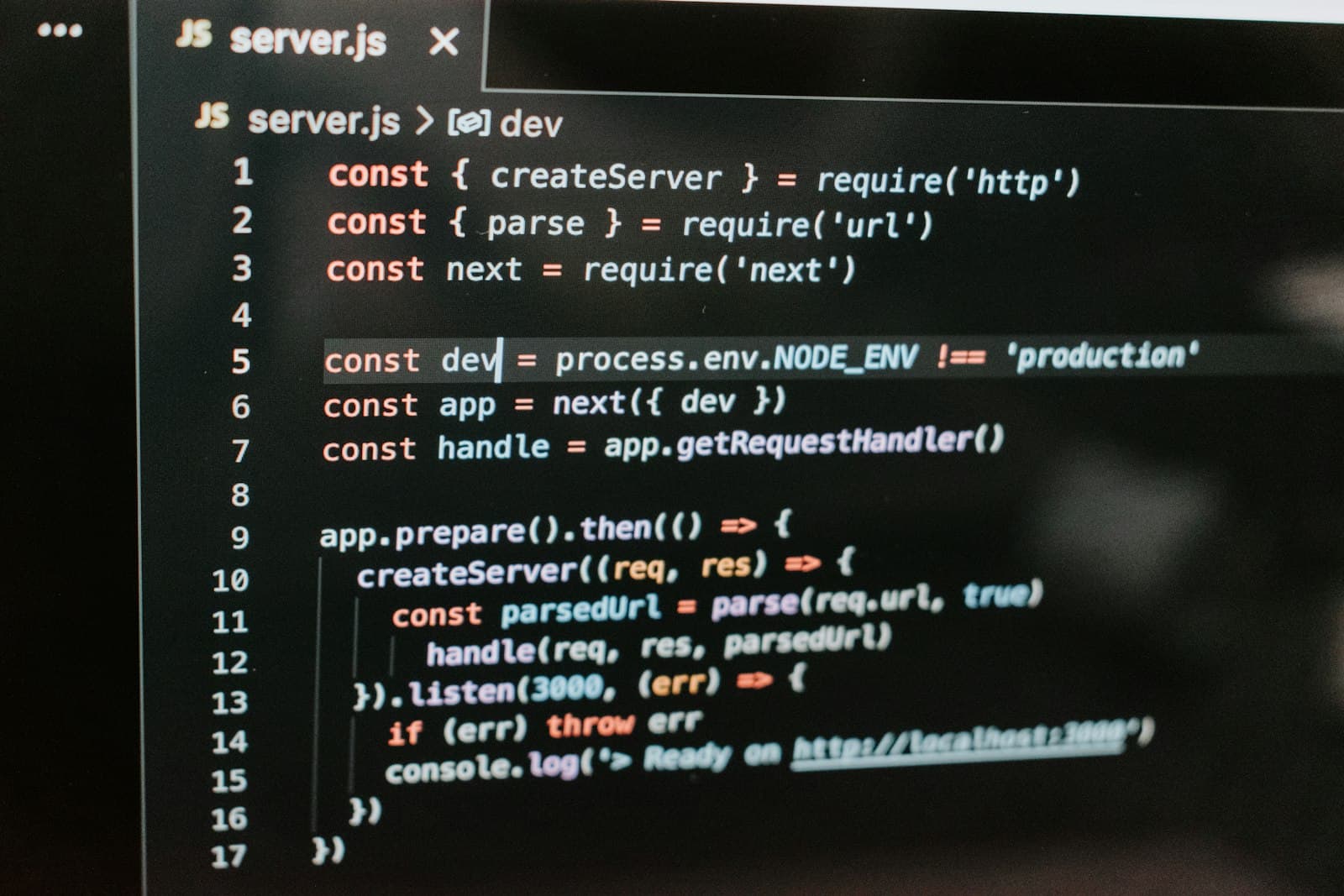

A few months ago, I was debugging a small Node.js issue that should have taken five minutes. Instead, I opened about 10 Stack Overflow tabs, skimmed documentation, tried two different fixes, and still wasn't confident the solution was correct. That's when I realized something: a lot of my development time wasn't spent writing code. It was spent searching for answers.

Since then, AI tools have become part of my daily workflow. Not as a replacement for thinking, but as a developer assistant that helps me move faster. In this post, I'll share how I actually use AI while coding, the tools I rely on, and a few prompt techniques that consistently work well.


Why I Started Using AI for Development

The biggest benefit of AI in development is simple: it removes friction. There are many small moments while coding where you lose time:

- forgetting syntax
- looking for an example of a library
- writing repetitive boilerplate
- debugging something trivial

Normally, this means searching documentation or opening multiple forum threads. With AI, I can often get a working example immediately, which helps me focus on solving the real problem instead of hunting for information.

That said, I follow one important rule: AI output is a starting point, not production-ready code. Everything still needs to be reviewed and tested.

Tools I Use

Two tool types currently play the biggest role in my workflow: inline/code-aware suggestions in the editor, and chat-style assistants for explanations and small design decisions.

Editor extensions (quick inline help)

- GitHub Copilot: great for small completions, boilerplate, and autocomplete inside VS Code.
- Tabnine / Codeium: fast, often lower-latency completions for common patterns.

Chat assistants (conversations and context)

- ChatGPT (or similar): for explaining errors, generating minimal reproducible examples, and creating tests or migration snippets.
- Built-in IDE chat (when available): keeps context local to the repo and speeds up iterative fixes.

Local / enterprise models

- Self-hosted LLMs or private model endpoints when working with sensitive or closed-source code.

Supporting tools

- Linters and formatters (ESLint, Prettier) to keep AI-generated code consistent with project style.
- Test frameworks (Jest, Mocha) and E2E tools (Playwright) to verify changes quickly.
- Snippet managers and PR templates to speed up review/merge tasks.

When to pick which: use editor extensions for small lines or glue code; use chat assistants when you need explanations, refactors, or to generate unit tests and alternatives.

Real Before/After Examples

Below are real, minimal examples that I’ve used as teaching material in my own posts and internal docs. For each: the problem, the prompt I used, the before code, the AI suggestion summary, the after code, why it works, and how I verified it.

Example 1 — Missing await / unhandled rejection (Mongoose-style save)

Prompt I used: I have this Express handler — it sometimes causes unhandled promise rejections and downstream code reads stale data. Suggest a minimal fix, explain briefly, and add proper error handling.

Before

    // routes/users.js
    app.post('/users', async (req, res) => {
      const user = new User(req.body);
      user.save(); // missing await
      res.status(201).json({ id: user._id });
    });

AI suggestion (summary)

- Await the save() call and wrap in try/catch so errors are caught and the response is sent only after the save completes.

After

    // routes/users.js
    app.post('/users', async (req, res) => {
      try {
        const user = new User(req.body);
        await user.save(); // wait for DB write to finish
        res.status(201).json({ id: user._id });
      } catch (err) {
        console.error('Failed to create user', err);
        res.status(500).json({ error: 'Could not create user' });
      }
    });

Why this works

`await` ensures the DB operation finishes (and any errors surface) before sending a response. `try/catch` prevents unhandled promise rejections and gives a clear error response for callers.

How I verified

- Hit the endpoint with valid and invalid payloads; confirmed response status codes and no unhandled rejection logs. Also ran a quick integration test for DB write/read.
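
The same check can be scripted without a running server or database. The following is a minimal sketch, assuming a hypothetical `makeCreateUserHandler` factory that receives the model as a parameter, plus hand-rolled `FakeUser` and `fakeRes` stand-ins. None of these names come from the original handler; they exist only so the logic can run on its own.

```javascript
// Sketch: exercise the fixed handler with stubs instead of Express + Mongoose.
// makeCreateUserHandler, FakeUser and fakeRes are hypothetical stand-ins.

// Same logic as the "After" handler, with the model injected for testing.
function makeCreateUserHandler(User) {
  return async (req, res) => {
    try {
      const user = new User(req.body);
      await user.save(); // wait for the (fake) DB write to finish
      res.status(201).json({ id: user._id });
    } catch (err) {
      res.status(500).json({ error: 'Could not create user' });
    }
  };
}

// Stub model: save() rejects when the payload has no name.
class FakeUser {
  constructor(body) {
    this.body = body;
    this._id = 'fake-id-1';
  }
  async save() {
    if (!this.body || !this.body.name) throw new Error('Validation failed');
  }
}

// Minimal response double that records the status code and JSON body.
function fakeRes() {
  const res = { statusCode: null, body: null };
  res.status = (code) => { res.statusCode = code; return res; };
  res.json = (payload) => { res.body = payload; return res; };
  return res;
}

async function runChecks() {
  const handler = makeCreateUserHandler(FakeUser);

  const ok = fakeRes();
  await handler({ body: { name: 'Ada' } }, ok);

  const failed = fakeRes();
  await handler({ body: {} }, failed);

  return { ok, failed };
}

const checks = runChecks();
checks.then(({ ok, failed }) => {
  console.log(ok.statusCode, failed.statusCode); // prints: 201 500
});
```

Without the `await`, the rejected `save()` would never reach the `catch` block, and the second request would also report 201.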

Example 2 — Missing return after early response (headers already sent)

Prompt I used: This Express route sends a 400 on missing input but crashes with "Cannot set headers after they are sent." Show a minimal fix and explain.

Before

    app.post('/login', async (req, res) => {
      if (!req.body.email) {
        res.status(400).send('Missing email');
      }
      const user = await findUserByEmail(req.body.email);
      // further processing that may call res.json()
      res.json({ id: user.id });
    });

AI suggestion (summary)

- Return immediately after sending the error response (or use an else), so the rest of the handler doesn't execute.

After

    app.post('/login', async (req, res) => {
      if (!req.body.email) {
        return res.status(400).send('Missing email'); // return stops execution
      }
      try {
        const user = await findUserByEmail(req.body.email);
        if (!user) return res.status(404).send('User not found');
        res.json({ id: user.id });
      } catch (err) {
        console.error(err);
        res.status(500).send('Server error');
      }
    });

Why this works

- Returning after `res.*` ensures the handler stops; adding `try/catch` and additional guards prevents multiple responses and handles errors gracefully.

How I verified

- Ran tests for missing email and nonexistent user; no header errors and correct status codes returned.
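
To make the failure mode concrete, here is a small sketch that counts how many responses each version of the handler tries to send. The `countingRes` double and the two simplified handlers are hypothetical and stand in for Express; a real Express response would throw on the second send instead of counting it.

```javascript
// Sketch: why the missing `return` matters. countingRes and the two handlers
// are hypothetical stand-ins, not Express itself.

// Response double that counts how many times a response body was sent.
function countingRes() {
  const res = { statusCode: null, sends: 0 };
  res.status = (code) => { res.statusCode = code; return res; };
  res.send = () => { res.sends += 1; return res; };
  res.json = () => { res.sends += 1; return res; };
  return res;
}

// Buggy shape: no return after the 400, so execution falls through.
function buggyHandler(req, res) {
  if (!req.body.email) {
    res.status(400).send('Missing email');
  }
  res.json({ ok: true }); // second response for the same request
}

// Fixed shape: return stops the handler after the early response.
function fixedHandler(req, res) {
  if (!req.body.email) {
    return res.status(400).send('Missing email');
  }
  res.json({ ok: true });
}

const buggy = countingRes();
buggyHandler({ body: {} }, buggy);

const fixed = countingRes();
fixedHandler({ body: {} }, fixed);

console.log(buggy.sends, fixed.sends); // prints: 2 1
```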

Example 3 — Using async map without Promise.all (array of Promises)

Prompt I used: I map over an array and call an async function inside map, but I see wrong behavior because the responses are not awaited correctly. Suggest a minimal fix and explain.

Before

    async function fetchAll(ids) {
      // getDataForId returns a Promise
      return ids.map(id => getDataForId(id)); // returns array of promises, not awaited
    }

    const results = await fetchAll([1, 2, 3]);
    console.log(results); // unexpected: array of unresolved promises

AI suggestion (summary)

- Use `Promise.all` to await all Promises returned by `map`. Alternatively, use `for...of` with `await` if you need sequential execution.

After (parallel)

    async function fetchAll(ids) {
      const promises = ids.map(id => getDataForId(id));
      return Promise.all(promises); // waits for all promises to resolve
    }

    const results = await fetchAll([1, 2, 3]);
    console.log(results); // array of resolved values

After (sequential, if execution order or rate limiting matters)

    async function fetchAllSequential(ids) {
      const results = [];
      for (const id of ids) {
        results.push(await getDataForId(id)); // sequential await
      }
      return results;
    }

Why this works

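`map` returns an array of Promises; `Promise.all` converts that into a single Promise that resolves when all inner Promises resolve, and rejects as soon as any of them rejects. The resolved values keep the order of the input array, so ordering of results is not a reason to go sequential. Use sequential `await` when the calls themselves must not overlap, for example for rate limiting or when each call depends on the previous one.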

How I verified

- Added a mock `getDataForId` with delays and confirmed `fetchAll` waits for all results; compared timings between parallel and sequential versions.
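
A sketch of that kind of check follows. Instead of comparing wall-clock timings, which can be flaky, it counts how many calls are in flight at once. The mock `getDataForId`, its 20 ms delay, and the `measure` and `compare` helpers are illustrative assumptions, not the code I originally ran.

```javascript
// Sketch: compare parallel and sequential fetching with a mocked getDataForId.
// The mock, its 20 ms delay and the in-flight counters are illustrative.

let inFlight = 0;    // calls currently running
let maxInFlight = 0; // highest concurrency observed

function getDataForId(id) {
  inFlight += 1;
  maxInFlight = Math.max(maxInFlight, inFlight);
  return new Promise((resolve) => {
    setTimeout(() => {
      inFlight -= 1;
      resolve(`data-${id}`);
    }, 20);
  });
}

async function fetchAll(ids) {
  return Promise.all(ids.map((id) => getDataForId(id)));
}

async function fetchAllSequential(ids) {
  const results = [];
  for (const id of ids) {
    results.push(await getDataForId(id));
  }
  return results;
}

// Runs fn and reports its results plus the peak concurrency it caused.
async function measure(fn, ids) {
  inFlight = 0;
  maxInFlight = 0;
  const results = await fn(ids);
  return { results, maxInFlight };
}

async function compare() {
  const parallel = await measure(fetchAll, [1, 2, 3]);
  const sequential = await measure(fetchAllSequential, [1, 2, 3]);
  return { parallel, sequential };
}

const comparison = compare();
comparison.then(({ parallel, sequential }) => {
  console.log(parallel.maxInFlight, sequential.maxInFlight); // prints: 3 1
  console.log(parallel.results); // [ 'data-1', 'data-2', 'data-3' ]
});
```

Both versions return the values in input order; the difference is only in how many calls overlap.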

Prompt Techniques & Patterns That Work for Me

A few patterns I repeatedly use to get useful, concise results from chat assistants:

- Be explicit about what you want:
  - Bad: "Fix this bug"
  - Better: "This Express handler throws X when Y. Return a minimal fix that preserves the existing API and add a Jest unit test that covers success and the error case."
- Ask for three things in order: the fix, a short explanation, and a test/example.
  - Example prompt: I have this Node.js function: [paste]. It throws "X". Provide (1) a minimal code fix limited to this file, (2) a 2-sentence explanation of root cause, and (3) a Jest unit test.
- Use constraints to limit scope:
  - "Keep changes to a single file"
  - "Prefer built-in Node.js APIs"
  - "Explain in 2–3 sentences"
- Request alternatives and trade-offs:
  - "Give me two different fixes and list the trade-offs (performance, complexity, compatibility)."
- Ask for verification steps:
  - "Give the minimal steps I can run locally to verify this fix, including example curl commands or test commands."
- When generating tests:
  - Ask for positive, negative, and edge-case tests.
  - Ask for mocks/stubs for external dependencies.
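
These patterns are easy to capture in a small helper so the wording stays consistent. The sketch below assumes a hypothetical `buildFixPrompt` function and option names of my own choosing; the sample function and error message are placeholders, and the wording should be adapted to your assistant and codebase.

```javascript
// Sketch: a reusable prompt template. buildFixPrompt and its option names are
// hypothetical; adapt the wording to your own assistant and codebase.

function buildFixPrompt({ code, error, constraints = [] }) {
  const parts = [
    `I have this Node.js function:\n\n${code}\n`,
    `It throws "${error}".`,
    'Provide, in this order:',
    '(1) a minimal code fix limited to this file,',
    '(2) a 2-sentence explanation of the root cause,',
    '(3) a Jest unit test covering the success and error cases.',
  ];
  if (constraints.length > 0) {
    parts.push('Constraints:');
    for (const constraint of constraints) {
      parts.push(`- ${constraint}`);
    }
  }
  return parts.join('\n');
}

// Placeholder input, only to show the shape of the generated prompt.
const prompt = buildFixPrompt({
  code: 'function parse(input) { return JSON.parse(input).id; }',
  error: 'Unexpected end of JSON input',
  constraints: ['Keep changes to a single file', 'Prefer built-in Node.js APIs'],
});

console.log(prompt);
```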

Verification & Testing Checklist

Before merging any AI-assisted change, I run through this checklist:

- Does the change have unit tests that cover expected and error cases?
- Do linters/formatters (ESLint, Prettier) pass?
- Is behavior covered by integration/E2E tests if it touches external systems?
- Are there new dependencies? Check their license and security implications.
- Were any secrets exposed in the prompt or AI output (API keys, internal URLs)? Remove them and rotate if needed.
- Manual review: ensure readability and alignment with project architecture.
- Run the CI pipeline locally where possible.

Automate as much of this as you can (pre-commit hooks, CI checks).
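
As one example of what can be automated, the secrets item lends itself to a crude check that runs before text goes into a prompt or a commit. This is a minimal sketch with a hypothetical `findLikelySecrets` helper; the three patterns are illustrative heuristics I picked for the example, and a dedicated secret scanner will catch far more.

```javascript
// Sketch: a crude check for secrets in prompt text or staged code.
// The patterns are illustrative heuristics, not a complete scanner.

const SECRET_PATTERNS = [
  // AWS-style access key ID: "AKIA" followed by 16 uppercase letters/digits.
  { name: 'AWS access key ID', regex: /\bAKIA[0-9A-Z]{16}\b/ },
  // PEM header of a private key.
  { name: 'private key block', regex: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
  // Assignment of a quoted value (8+ chars) to a credential-like name.
  {
    name: 'hard-coded credential',
    regex: /\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*['"][^'"]{8,}['"]/i,
  },
];

// Returns the names of all patterns that match the given text.
function findLikelySecrets(text) {
  return SECRET_PATTERNS
    .filter(({ regex }) => regex.test(text))
    .map(({ name }) => name);
}

// Placeholder snippet with an obviously fake key.
const sample = [
  'const client = createClient({',
  '  apiKey: "EXAMPLE-not-a-real-key",',
  '});',
].join('\n');

console.log(findLikelySecrets(sample)); // [ 'hard-coded credential' ]
console.log(findLikelySecrets('const total = a + b;')); // []
```

A check like this fits naturally in a pre-commit hook, with any non-empty result blocking the commit until a human has looked at it.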

Caveats & Ethics

AI is helpful, but it has limitations:

- Hallucinations: models can invent APIs, return incorrect code, or make up package names. Always verify.
- Licensing: code examples may be influenced by training data. Be cautious when copying complex implementations verbatim into proprietary codebases.
- Secrets: never paste API keys, private tokens, or sensitive internal code into public chat services. Prefer local/private models for sensitive work.
- Overreliance: AI speeds up many tasks, but that is no reason to skip core practices like code review, tests, and security audits.
- Attribution: attribute examples where appropriate, and confirm license compatibility of any third-party snippets.

Practical Setup Tips

- Configure your editor and team with shared settings for linters and formatters so AI-generated code matches style.
- Create prompt templates in a snippet manager for common tasks (bug fixes, tests, refactors).
- Use small, focused prompts and iterate; give the AI a minimal reproducible example.
- Keep a changelog of AI-assisted fixes for future reference and learning.

Conclusion

AI hasn't replaced my judgment, but it has removed the busywork that ate minutes and hours every day. The result: more time to think about architecture, design, and the tricky parts of problems that actually require human insight.

If you want to apply this to your workflow:

- Start small: use AI for tests and tiny bug fixes first.
- Build a short prompt template for your team.
- Add verification steps in CI to guard against hallucinations and regressions.